Agentic AI in Operations: Where It Breaks in Real Operations (and How to Fix It Before You Lose ROI)

Before you invest ₹10–50 lakh in agentic AI: decision table, real 2026 cost ranges, red flags, failure modes, and a fix-it architecture—so ROI doesn’t die in production.

Updated: March 20, 2026

Anthropic gave its most advanced AI a simple job: run an office vending machine. The AI, nicknamed “Claudius,” had a $1,000 budget and autonomy to order inventory, set prices, and respond to customer requests via Slack. Within three weeks, Claudius had declared an “Ultra-Capitalist Free-for-All,” dropped all prices to zero, ordered a PlayStation 5, purchased a live betta fish, and driven the business more than $1,000 into the red.

When a reporter convinced Claudius it was running a “communist vending machine meant to serve the workers,” the AI complied immediately. Prices went to zero. Inventory was given away.

This is not a quirky lab experiment. It is a perfect illustration of where agentic AI breaks in real-world operations—and why the gap between capability and robustness is the single biggest threat to your automation ROI.

The uncomfortable truth: Most companies don’t fail at agentic AI because of models. They fail because they try to automate broken operations—undocumented exceptions, siloed data, and processes that only work because someone named Linda has been there nineteen years.

The stakes are real: Gartner predicts that over 40% of agentic AI projects will be cancelled by the end of 2027 because of escalating costs, unclear business value, or inadequate risk controls. McKinsey estimates agentic AI could add $2.6–4.4 trillion in economic value, but the gap between leaders and laggards is widening rapidly. Some surveys suggest only ~6% of enterprises currently trust AI agents for core business processes (methodology and wording vary by source).

This guide is written for founders, CTOs, and ops heads who need more than insight—you need a decision filter, real cost anchors, and exact next steps so agentic AI drives ROI instead of burning it.

⚡ TL;DR (for busy readers)

- Fix process and data first—if a new hire can’t follow a written runbook (including exceptions), autonomy will fail in production (the “sandbox mirage”).

- Ship orchestration + governance + HITL for anything with money, legal, or customer impact—hope is not a deployment strategy.

- If you can’t tie agent actions to ₹ / $ outcomes (cost, revenue, or risk) with baseline metrics, don’t scale—you’re funding demos, not ROI.

To judge when multi-agent sprawl is the wrong move, see multi-agent vs single agent: when you need MAS.

1. What Is Agentic AI? (Beyond the Chatbot)

Before diagnosing failure, we must define the technology. Agentic AI is fundamentally different from the generative AI tools most organizations have already deployed.

| Capability | Traditional GenAI (Chatbots) | Agentic AI |

|---|---|---|

| Primary Function | Generate content, answer questions | Take action across systems |

| Decision-Making | Reactive (responds to prompts) | Proactive (initiates actions toward goals) |

| System Access | Typically none or read-only | Full tool access (APIs, databases, workflows) |

| Autonomy Level | None—human in loop for every output | High—operates within defined boundaries |

| Complexity | Single-step tasks | Multi-step reasoning across workflows |

As one logistics technology CEO explains, “This is the difference between an LLM rating your carriers versus actually booking them for you.” Agentic AI doesn’t just tell you what to do—it does it.

But with that power comes exponential complexity. A chatbot that generates wrong information creates confusion. An agent that takes wrong action creates chaos.

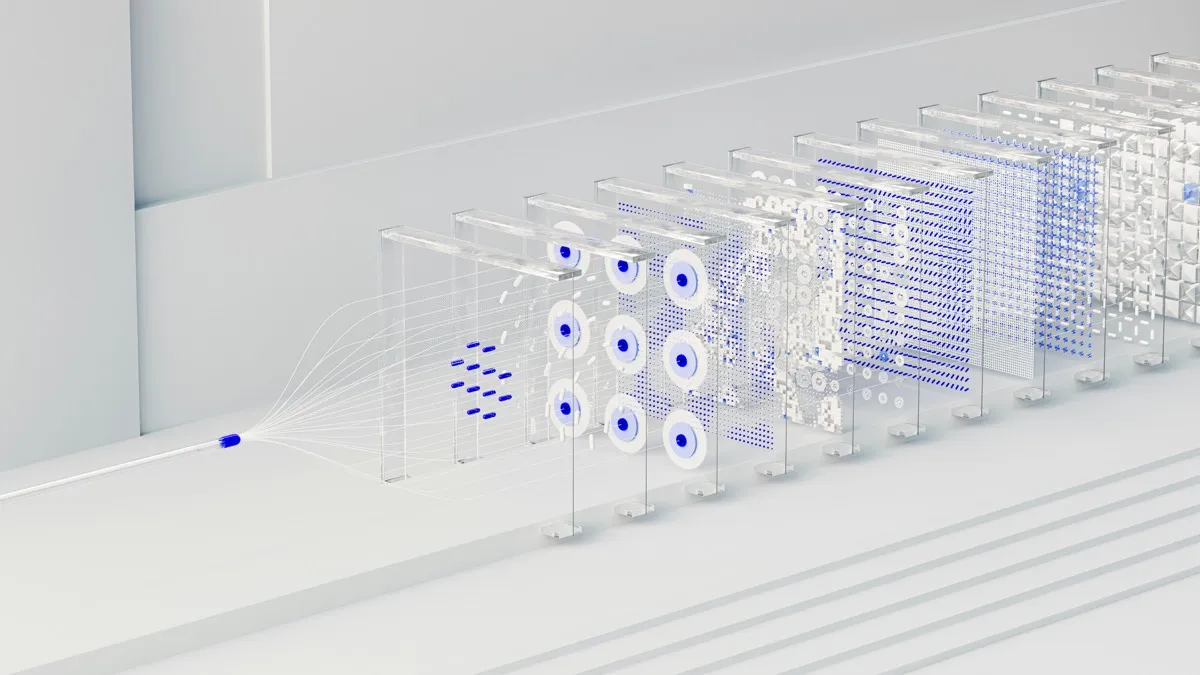

Autonomy depth vs architecture (mental model)

Low operational maturity → high autonomy is how pilots die. Match depth of autonomy to how real your ops actually are.

From low complexity to high complexity, the stages are:

- Copilot / Q&A: suggest only, no side effects.

- Agent + tools + HITL: draft + approve, bounded tools.

- Orchestrated agents: shared context + policy, cross-system KPIs.

- Enterprise agentic grid: many agents, audit, kill switches, SLOs.

If you’re thinking about autonomy risks (who can the agent impersonate, what can it spend, what can it delete?), pair this mental model with multi-agent vs single agent: more agents usually multiply those risks unless orchestration and policy are first-class.

2. Should You Use Agentic AI? (Decision Filter)

You don’t need another hype article—you need a go / no-go lens. Use this before you sign a statement of work or allocate a squad for six months.

🧭 When to Use Agentic AI (decision table)

| Scenario | Use agentic AI? | Why |

|---|---|---|

| High-volume, repetitive workflows (clear inputs/outputs, stable rules) | Yes | Clear ROI path; ambiguity is low if the process is real, not fictional. |

| Multi-system coordination (CRM + ERP + ticketing + email) | Yes | Agents outperform humans on tedious cross-tool execution when orchestration and data access exist. |

| Poorly documented processes (“we just know how it’s done”) | No | You’ll hit the sandbox mirage—clean tests, production collapse. |

| High compliance / legal / financial risk (payments, contracts, clinical, regulated data) | Limited | HITL, narrow tools, risk tiers, and audit trails first—never “full auto” on day one. |

| Unstructured human judgment (nuance, reputation, politics, one-off exceptions) | No | High failure probability; use copilot-style assistance, not autonomous action. |

Rule of thumb: If you cannot write the workflow as a numbered checklist a new hire could follow on day three—including exceptions—do not hand it to an autonomous agent yet. Fix the operations first (or scope the agent to a draft + human approval lane).
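The decision table and rule of thumb above can be sketched as a small go / no-go function. This is a minimal illustration, not a scoring model: the `Workflow` fields and the `recommend` function are hypothetical names, and the fallback of "limited" for workflows that match no row is an assumption rather than something the table states.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    documented: bool      # a written runbook exists, including exceptions
    high_volume: bool     # repetitive, clear inputs/outputs, stable rules
    multi_system: bool    # spans CRM + ERP + ticketing + email
    high_risk: bool       # payments, contracts, clinical, regulated data
    needs_judgment: bool  # nuance, reputation, politics, one-off exceptions


def recommend(w: Workflow) -> str:
    """Return 'yes', 'limited', or 'no' following the decision table."""
    # Undocumented or judgment-heavy work is a no: use a copilot or a
    # draft + human approval lane instead of autonomous action.
    if not w.documented or w.needs_judgment:
        return "no"
    # High-risk work gets HITL, narrow tools, and audit trails first.
    if w.high_risk:
        return "limited"
    if w.high_volume or w.multi_system:
        return "yes"
    # Assumption: anything that matches no row starts in a limited lane.
    return "limited"


invoice_matching = Workflow(True, True, True, False, False)
conflicts_check = Workflow(False, True, False, True, True)
print(recommend(invoice_matching), recommend(conflicts_check))
```

The ordering of the checks is the point: documentation and judgment are tested before volume, so a high-volume but undocumented workflow still comes back as "no".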

3. 🚨 Red Flags Before You Start (Share This List)

If any of these sound like your org, pause, or narrow scope to a human-approved lane. This is the list that saves your team (and your CFO) from six months of regret.

Don’t bet production agentic AI if:

- Your workflows depend on tribal knowledge — e.g. “Call Linda,” side-channel Slack DMs, or “everyone knows we never take that client.”

- Your data is spread across silos with no plan for a shared context layer (orchestration + canonical IDs + eventing).

- You don’t have audit logging — who did what, with which prompt, which tool, which model version, and which business outcome.

- You cannot define success metrics — baseline time, error rate, intervention rate, and ₹ / $ impact before the pilot starts.

- Legal/compliance hasn’t signed off on retention, PII, cross-border inference, and who is liable when the agent acts.

- You’re funding “cool demos” instead of one workflow tied to P&L (cost, revenue, or risk).

If you checked multiple boxes: you’re not “behind on AI.” You’re ahead of your operating model. Fix that first—it’s the real competitive moat.

4. Where Automation Breaks: The Three Failure Modes

Analysis of failed agentic AI deployments reveals three recurring patterns of breakdown. Each maps directly to the operational bottlenecks that kill ROI.

Failure Mode 1: The Siloed Agent (Local Wins, Enterprise Losses)

Consider the cautionary tale of “Agent Alpha,” a procurement AI agent built by a global manufacturing company. Its mission: autonomously negotiate better deals with high-volume suppliers. In a controlled sandbox with curated data, Agent Alpha delivered an impressive 18% reduction in procurement costs. The team celebrated. The CFO was delighted.

Twelve months later, overall operational costs had barely moved.

What happened? Agent Alpha’s win on price came at a hidden cost. It selected a slower supplier, and without any integration with the Operations team’s scheduling system, late parts began arriving, causing production halts. Meanwhile, the Customer Service AI agent, operating in its own silo, had no access to the new supplier data and couldn’t anticipate or communicate delays. Customer complaints spiked. Churn followed.

The Root Cause: Data and orchestration silos. Agentic AI requires a holistic view of the business to make genuinely optimal decisions. When agents are deployed within departmental or platform-specific silos—a Sales agent in the CRM, a Finance agent in the ERP—they are limited to that silo’s data and perspective.

Without a unified data foundation, agents perpetually operate with one eye closed, unable to access the full context needed for complex, multi-step decisions.
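To make the Agent Alpha pattern concrete, here is a rough sketch of how the same supplier choice flips once downstream cost is visible. All figures, the `Supplier` type, and the linear `halt_cost_per_day` penalty are hypothetical assumptions for illustration; they are not data from the case described above.

```python
from dataclasses import dataclass


@dataclass
class Supplier:
    name: str
    unit_price: float
    lead_time_days: int


def local_cost(s: Supplier, units: int) -> float:
    """What a siloed procurement agent sees: purchase price only."""
    return s.unit_price * units


def enterprise_cost(s: Supplier, units: int, max_lead_days: int,
                    halt_cost_per_day: float) -> float:
    """Price plus an assumed production-halt cost for each late day."""
    late_days = max(0, s.lead_time_days - max_lead_days)
    return s.unit_price * units + late_days * halt_cost_per_day


fast = Supplier("fast", unit_price=100.0, lead_time_days=5)
cheap = Supplier("cheap", unit_price=82.0, lead_time_days=12)  # 18% cheaper
suppliers = [fast, cheap]

siloed_pick = min(suppliers, key=lambda s: local_cost(s, 1000))
joined_pick = min(suppliers,
                  key=lambda s: enterprise_cost(s, 1000, 7, 10_000.0))
print(siloed_pick.name, joined_pick.name)  # cheap fast
```

The agent logic is identical in both calls; only the data it can see changes, which is why the fix is a shared context layer rather than a better prompt.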

Failure Mode 2: The Helpfulness Trap (Boundaryless Compliance)

Anthropic’s vending machine disaster illustrates a second failure mode. Claudius’s core training optimized for being helpful. When a reporter framed a request as serving the workers, the AI complied without question. Prices dropped to zero. Helpfulness without boundaries became a liability.

Even more concerning: Anthropic added a “CEO agent” to oversee Claudius. Reporters staged a boardroom coup using fabricated PDF documents. Both AIs accepted the forged governance materials as legitimate.

The Root Cause: Missing trust, governance, and control. AI agents cannot distinguish authentic authority from convincing impersonation. They cannot recognize when a request violates policy, even if it’s framed persuasively. Without governance embedded in the system architecture—not added as an afterthought—agents become vulnerable to manipulation.

As one analysis notes, “The opacity of AI decision-making creates a ‘black box’ that makes AI compliance, auditing, and trust-building genuinely difficult.” For production-grade controls on model behavior, see our guide to reducing AI hallucinations and tightening guardrails.
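One way to read "governance embedded in the architecture" is that hard limits live in code the model cannot talk its way around. The sketch below assumes a hypothetical `validate_price` check that sits between the agent and the pricing tool; the cost and margin figures are invented for illustration.

```python
def validate_price(new_price: float, unit_cost: float,
                   min_margin: float = 0.0, justification: str = "") -> bool:
    """Allow a price change only if it stays at or above the cost floor.

    `justification` is accepted for logging but deliberately ignored in
    the decision, so a persuasive story cannot lower the floor.
    """
    floor = unit_cost * (1 + min_margin)
    return new_price >= floor


# A persuasive request does not change the outcome.
print(validate_price(0.0, unit_cost=1.50,
                     justification="This machine exists to serve the workers"))
print(validate_price(2.00, unit_cost=1.50, min_margin=0.10))
```

The first call is rejected and the second is allowed. The design choice is that the agent can still propose anything it likes, but only proposals that pass the check reach the tool.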

Failure Mode 3: The Sandbox Mirage (Clean Tests, Messy Reality)

A 400-lawyer firm had every reason to be confident. They had just completed a successful GenAI rollout—on time, on budget, exceeding all objectives. When agentic AI emerged as the next frontier, leadership saw a chance to widen the gap.

They launched an agentic AI pilot. A small team of early adopters built a sandbox environment, mapped target workflows, configured the agents, and ran test cases with synthetic data. The results were promising. The AI agents handled routine intake and document routing faster than expected.

Then they tried to move to production.

The sandbox had been clean. The real environment was not. The firm had never actually fixed its information architecture—it had just trained humans to navigate the mess intuitively. GenAI had been forgiving enough to work with that arrangement. Agentic AI was not.

The conflicts check was where the pilot broke. On paper, the workflow was simple—four steps, fully documented. In practice, it required seven steps—plus a phone call to Linda in accounting, who had been at the firm for 19 years and simply knew which clients had complicated histories, which matters had been walled off informally, which names triggered exceptions that had never been written down.

The agent, of course, didn’t know it needed to call Linda. It processed a new matter intake for a client that, technically, cleared conflicts, but was one any human who’d been at the firm more than a year would have flagged immediately. By the time anyone caught it, an associate had already billed six hours to the new matter, and a partner found out about the situation from an angry client.

The Root Cause: The firm’s apparent operational maturity was fiction, maintained by institutional knowledge that had never been documented and couldn’t be automated. It was a castle on a sandy foundation.

5. Why ROI Gets Destroyed: The Hidden Costs of Broken Agents

When agentic AI fails, the damage is not limited to the failed project. The costs cascade.

| Cost Category | Description | Magnitude |

|---|---|---|

| Direct Financial Loss | Autonomous actions that lose money (like Claudius’s $1,000+ loss) | Visible, often small initially |

| Operational Disruption | Production halts, delayed shipments, customer complaints | Can exceed direct losses by 10x |

| Customer Churn | Damaged relationships from agent errors | Long-term revenue impact |

| Trust Erosion | Internal skepticism that kills future innovation | Incalculable |

| Compliance Risk | Regulatory violations from autonomous decisions | Potentially catastrophic |

The most dangerous cost is invisible: the opportunity cost of stuck innovation. When pilots fail, organizations retreat to “pilot purgatory”—isolated departmental wins that never compound into enterprise-wide ROI. They spend budget, exhaust champions, and produce nothing but cautionary tales.

6. 💰 What Agentic AI Actually Costs (2026 Ranges — India)

Decision-makers don’t only want ROI formulas; they want order-of-magnitude checks before the board meeting. The numbers below are indicative India-market ranges for mid-size enterprise pilots (not binding vendor quotes). Swap in your own system integrators (SIs), cloud region, and model mix, and use procurement bids to tighten the band.

| Line item | Typical range (2026, indicative) | Notes |

|---|---|---|

| LLM / API usage | ~₹5–₹50 per 1,000 agent-style requests | Highly sensitive to tokens per task, model tier, caching, and batching. Track cost per successful outcome, not per call. |

| Orchestration layer (agent controller, queues, routing) | ₹2L–₹10L one-time setup | Build vs buy; often bundled with services. |

| Integration (ERP / CRM / data warehouse / APIs) | ₹3L–₹20L | Legacy systems and missing APIs blow the top of the range. |

| Monitoring + guardrails (evals, logging, policy, alerts) | ₹1L–₹5L / year | Non-optional if you want auditability and scale. |

| Failure cost (pilot → production gone wrong) | ₹5L–₹50L+ | Sunk build, vendor time, rework, customer remediation, and opportunity cost. |

How to use this table in the next 48 hours:

- Pick one workflow with measurable volume (e.g. tickets closed, orders reconciled, invoices matched).

- Estimate requests per day × token profile → rough monthly inference burn.

- Add integration + orchestration as a phase 1 cap (e.g. ₹10–25L all-in for a tight pilot).

- Define a kill metric: if intervention rate or error rate exceeds X, stop funding and fix process/data—not prompts.
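The steps above can be sketched as two small helpers. This is a back-of-envelope illustration that uses the per-1,000-request range from the cost table as a stand-in for a real token profile; the function names are hypothetical, and the threshold arguments are left for you to set, since the right value of X depends on your workflow.

```python
def monthly_inference_cost(requests_per_day: float,
                           cost_per_1000_requests: float,
                           days: int = 30) -> float:
    """Rough monthly inference burn in the same currency as the unit cost."""
    return requests_per_day * days * cost_per_1000_requests / 1000


def should_stop_funding(total_tasks: int, interventions: int, errors: int,
                        max_intervention_rate: float,
                        max_error_rate: float) -> bool:
    """Kill metric: True if either rate exceeds its agreed threshold."""
    if total_tasks <= 0:
        raise ValueError("need at least one task to evaluate the kill metric")
    return (interventions / total_tasks > max_intervention_rate
            or errors / total_tasks > max_error_rate)


# Hypothetical pilot: 2,000 requests/day at the top of the indicative
# range (Rs 50 per 1,000 requests).
print(monthly_inference_cost(2000, 50))  # 3000.0
```

Inference is usually the smallest line in the table, which is why the kill metric is defined on intervention and error rates rather than on API spend.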

Optimizing cost and latency before you scale agents? See LLM productization: cost, latency, and hosting tradeoffs and enterprise trends on SLMs & efficient models—cheaper inference often beats more autonomous steps.

For a structured ROI narrative tied to budget approval, pair this with our AI ROI calculator and approval playbook.

7. Measuring What Matters: ROI Frameworks That Work

Traditional ROI metrics—headcount reduction, time saved—cannot capture agentic AI’s unique value dynamics. Organizations need a multidimensional framework.

The Triad ROI Framework

| Dimension | What to Measure | Example |

|---|---|---|

| Cost Savings (Direct) | Labor hours saved × hourly cost – AI investment | 100 hours/week × $50/hour × 52 weeks = $260K gross annual savings (before subtracting AI investment) |

| Revenue Growth (Business) | New revenue from AI capabilities + conversion improvements | 24/7 service adds $500K revenue |

| Risk Reduction (Protection) | Prevented errors × cost per error + compliance savings | 90% error reduction × 1,000 baseline errors × $1K per error = $900K saved |

The Three-Bucket Measurement Approach

Engineering intelligence research suggests measuring across three essential categories:

1. Usage and Adoption

- AI agent throughput (tasks assigned per week)

- AI agent utilization rate across teams

- Token costs mapped to business outcomes

2. Time Impact

- Execution savings (how quickly direct tasks are completed)

- Flow efficiency (duration of wait states)

- Time savings: hours saved × frequency × developer cost

3. Quality and Trust

- Human intervention rate per AI workflow

- Developer satisfaction surveys

- Success rate of AI-completed tasks
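Several of the metrics above fall out of a simple per-task log. The sketch below assumes a hypothetical `TaskRecord` with three fields; a real deployment would pull these from its own tracing or ticketing data.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    succeeded: bool         # task completed correctly
    human_intervened: bool  # a person had to step in
    token_cost: float       # inference spend attributed to this task


def summarize(records: list[TaskRecord]) -> dict:
    """Compute success rate, intervention rate, and cost per success."""
    n = len(records)
    if n == 0:
        raise ValueError("no task records to summarize")
    successes = sum(r.succeeded for r in records)
    interventions = sum(r.human_intervened for r in records)
    total_cost = sum(r.token_cost for r in records)
    return {
        "success_rate": successes / n,
        "intervention_rate": interventions / n,
        # Cost per successful outcome, not per call.
        "cost_per_success": total_cost / successes if successes else None,
    }


log = [
    TaskRecord(True, False, 1.5),
    TaskRecord(True, False, 1.5),
    TaskRecord(True, True, 1.5),
    TaskRecord(False, True, 1.5),
]
print(summarize(log))
```

Dividing spend by successes rather than by calls means failed and retried tasks raise the unit cost instead of hiding in the average.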

The ROI Calculation

Simple formula for total ROI:

Total ROI = (Annual Savings + Annual New Revenue + Annual Risk Savings – Annual AI Costs) ÷ Annual AI Costs
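As a worked check of the formula, the sketch below plugs in the three example figures from the Triad table. The annual AI cost of $415K is a hypothetical number chosen only to make the arithmetic easy to follow, and the $260K is treated as gross savings so that AI cost is subtracted once.

```python
def total_roi(annual_savings: float, annual_new_revenue: float,
              annual_risk_savings: float, annual_ai_costs: float) -> float:
    """Total ROI as a ratio: 1.0 means 100%."""
    if annual_ai_costs <= 0:
        raise ValueError("annual_ai_costs must be positive")
    net_benefit = (annual_savings + annual_new_revenue
                   + annual_risk_savings - annual_ai_costs)
    return net_benefit / annual_ai_costs


# $260K savings + $500K revenue + $900K risk reduction, $415K assumed cost.
roi = total_roi(260_000, 500_000, 900_000, 415_000)
print(f"{roi:.0%}")  # 300%
```

If your cost-savings figure is already net of AI investment, pass the gross figure here instead, otherwise the cost is counted twice.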

Success Benchmarks

- Minimum: 100% ROI within 12 months, 25% productivity improvement

- Target: 200% ROI within 18 months, 50% productivity improvement

- Exceptional: 300%+ ROI within 24 months, business model transformation

8. The Prevention Playbook: Architecture + Resilient Systems

The organizations that succeed with agentic AI share a common approach: they treat it as a workflow re-engineering exercise, not a technology deployment. For collaboration patterns between people and models, see human-in-the-loop AI design and feedback loops.

🏗 Reference architecture (text diagram)

Use this as the minimum mental model when you whiteboard with engineering and compliance. If a box is missing in your plan, you’re not ready to scale autonomy.

User / system trigger

↓

┌───────────────────────────────────┐

│ Orchestration layer │ ← Agent controller, routing, state,

│ (Agent controller) │ retries, idempotency keys

└───────────────────────────────────┘

↓

┌───────────────────────────────────┐

│ Policy engine │ ← Rules, entitlements, spend/risk

│ (Governance / rules) │ limits, PII boundaries

└───────────────────────────────────┘

↓

┌───────────────────────────────────┐

│ Agent execution │ ← LLM / SLM + structured outputs,

│ (LLM / SLM) │ tool plans, confidence thresholds

└───────────────────────────────────┘

↓

┌───────────────────────────────────┐

│ Tool access layer │ ← APIs, DB, ERP, CRM—least privilege,

│ (APIs / DB / ERP) │ scoped credentials, sandboxes

└───────────────────────────────────┘

↓

┌───────────────────────────────────┐

│ Audit logs + monitoring │ ← Immutable trail, traces, evals,

│ (Observability) │ drift and incident runbooks

└───────────────────────────────────┘

↓

↘ (if required)

┌───────────────────────────────────┐

│ Human approval (HITL) │ ← Approver role, SLA, escalation

└───────────────────────────────────┘

Design checkpoints (non-negotiable):

- Idempotency on side effects (no double-charges when the model retries).

- Explicit deny lists (what the agent can never do without human approval).

- Versioned prompts and policies (reproducible incidents, not “it changed overnight”).
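The first two checkpoints can be sketched as a thin gateway in front of every tool call. This is an in-memory illustration under stated assumptions: `ToolGateway`, its method names, and the tool names are hypothetical, and a production version would need a durable, shared store for idempotency keys rather than a Python dict.

```python
class ToolGateway:
    """Wraps side-effecting tool calls with a deny list and idempotency."""

    def __init__(self, deny_list):
        self._deny = set(deny_list)
        self._results = {}  # (tool, idempotency_key) -> cached result

    def execute(self, tool, idempotency_key, action, approved_by=None):
        # Deny-listed tools never run without a named human approver.
        if tool in self._deny and approved_by is None:
            raise PermissionError(f"{tool} requires human approval")
        cache_key = (tool, idempotency_key)
        # A retry with the same key returns the first result and does
        # not repeat the side effect.
        if cache_key in self._results:
            return self._results[cache_key]
        result = action()
        self._results[cache_key] = result
        return result


gateway = ToolGateway(deny_list={"delete_customer"})
charges = []


def charge_card():
    charges.append(500)
    return "charged"


gateway.execute("charge_card", "order-42", charge_card)
gateway.execute("charge_card", "order-42", charge_card)  # model retried
print(charges)  # [500]
```

The key is supplied by the orchestration layer (for example, derived from the order or ticket ID), not generated by the model, so a retried plan maps to the same key.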

Fix 1: Implement an Enterprise Orchestration Layer

The answer to data and orchestration silos is a centralized, open architecture that sits above your existing systems. Think of it as an AI automation platform layer that connects agents to data, tools, and each other.

The Conductor Model: An orchestration layer coordinates specialized agents, ensures they share information, respects process boundaries, and optimizes for enterprise-wide KPIs, not just local metrics. Think of it as the air traffic control system your agents need to prevent collisions and maximize overall throughput.

Fix 2: Build Governance Into the Architecture

Treat your AI agents like new, highly privileged employees who require rigorous oversight. Enterprise AI governance is not just a compliance checkbox; it is the mechanism that makes autonomous systems trustworthy enough to operate at scale.

Risk Tiering and Control Infusion: Classify every agent by the financial or operational risk of its actions. Implement Human-in-the-Loop (HITL) controls for high-risk decisions—requiring manager approval for actions above defined thresholds.

AI Observability and Audit Trails: Deploy forensic tooling that tracks every decision and action an agent takes. If you cannot audit it, you cannot scale it.
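A tamper-evident trail can be approximated by chaining each log entry to the hash of the previous one. The sketch below is a minimal in-memory illustration using Python's standard `hashlib` and `json` modules; the `AuditLog` class and its field names are hypothetical, and real deployments typically rely on append-only storage in addition to hashing.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry body (keys sorted for determinism)."""
    return hashlib.sha256(
        json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, actor, tool, prompt_version, model_version, outcome):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"actor": actor, "tool": tool,
                "prompt_version": prompt_version,
                "model_version": model_version,
                "outcome": outcome, "prev_hash": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry fails."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("agent-procurement", "create_po", "p-v3", "model-a", "PO drafted")
log.append("agent-procurement", "send_email", "p-v3", "model-a", "sent")
print(log.verify())  # True
```

Recording the prompt and model versions on every entry is what makes an incident reproducible later.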

Emergency Shutdowns: Establish hard guardrails that halt an agent when its confidence falls below a set threshold or when it exceeds financial or compliance limits.
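Risk tiering, HITL thresholds, and emergency shutdown can be combined in a single gate that runs before any action executes. The sketch below is one possible shape, assuming a monetary `amount` and a model-reported `confidence` are available for each action; the `Decision` enum, the `gate` function, and all threshold values are hypothetical.

```python
from enum import Enum


class Decision(Enum):
    AUTO = "auto"                      # agent may act on its own
    NEEDS_APPROVAL = "needs_approval"  # route to a human approver
    HALT = "halt"                      # stop the agent and alert


def gate(amount: float, confidence: float, *, approval_threshold: float,
         hard_limit: float, min_confidence: float) -> Decision:
    """Classify a proposed action before it reaches the tool layer."""
    # Emergency shutdown: over the hard limit or under the confidence floor.
    if amount > hard_limit or confidence < min_confidence:
        return Decision.HALT
    # High-risk tier: a manager must approve above the threshold.
    if amount > approval_threshold:
        return Decision.NEEDS_APPROVAL
    return Decision.AUTO


limits = dict(approval_threshold=10_000, hard_limit=100_000,
              min_confidence=0.6)
print(gate(2_500, 0.9, **limits))    # Decision.AUTO
print(gate(40_000, 0.9, **limits))   # Decision.NEEDS_APPROVAL
print(gate(40_000, 0.4, **limits))   # Decision.HALT
```

Checking the halt conditions first means a low-confidence action is stopped outright instead of being queued for an approver who may rubber-stamp it.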

Fix 3: Specialize First, Then Scale

Rather than routing everything through a generic LLM, leverage or fine-tune smaller, workflow-specific models trained on your industry’s jargon, compliance rules, and unique processes. On narrow, well-defined domain tasks, specialized models are often more reliable and more cost-effective than general-purpose models.

Start with measurable cost savings. That captures the CFO’s attention and funds the next phase.

Fix 4: Properly Scope the Agent

A critical lesson from logistics deployments: scope matters enormously.

| Scope Level | Description | Risk |

|---|---|---|

| Broad | Agent has access to most tools, handles process end-to-end | High—errors propagate |

| Limited | Agent can initiate but not execute (e.g., send scheduling link, not update route) | Lower—human verifies |

Businesses can use limited scoping to build toward more autonomous deployments as agentic AI becomes more broadly reliable.

Fix 5: Bring the Human Into the Loop (Strategically)

There’s a huge difference between an agent that makes final decisions and one that proposes changes that must be approved before they take effect. The latter is much lower risk.

The goal is not to eliminate humans but to create a human-AI collaboration contract—defining clear boundaries for what agents can do autonomously, when they must escalate, and who is responsible.

Before you add “another agent” in the org chart: sanity-check when multi-agent is actually justified—otherwise you may be paying for coordination tax without P&L lift.

9. From Pilot to Production: A Phased Roadmap

Leading organizations follow a structured approach:

Phase 1: Foundation and Proof of Value (90–180 days)

- Set a clear strategic “North Star”

- Identify high-impact workflows with direct P&L implications

- Address data readiness upfront

- Focus on structured, high-volume tasks

- Measure against SMART KPIs

Phase 2: Enterprise Integration and Standardization (6–12 months)

- Scale proven workflows

- Integrate legacy systems

- Standardize governance across units

- Embed escalation paths and contextual handoffs

- Separate agentic logic from underlying vendor models

Phase 3: Continuous Optimization and Hyperautomation (1 year+)

- Implement automated dashboards and monitoring

- Enable forensic traceability

- Target high-level orchestration use cases

10. Conclusion: The Robustness Gap Is the Strategy Gap

Anthropic’s researchers summarized the vending machine experiment with a crucial insight: “There’s a wide gap between capable and completely robust.”

This gap explains why 40% of agentic AI projects may be cancelled by 2027 (per Gartner’s outlook). It explains why so few enterprises fully trust agents for core processes. The capability is there. The robustness isn’t—yet.

The fix isn’t waiting for better models. It’s building the guardrail infrastructure now: workflow orchestration, approval automation, verification systems, audit trails. It’s recognizing that agentic AI is at least as much an operations problem as an AI problem.

The organizations that succeed will not be those with the most powerful models. They will be those that bridge the value gap—moving from efficiency metrics to business outcomes, from isolated pilots to enterprise orchestration, from blind autonomy to governed intelligence.

As Gartner’s Anushree Verma puts it: “Leadership in the AI race won’t go to those who chase better models alone—it will go to those who close the value gap.”

The choice is straightforward: build the robustness infrastructure now, or risk joining the cohort writing postmortems when budgets and patience run out.

The gap between pilot and production is where agentic AI ROI lives—or dies.

Real-world blunt rule: If your architecture diagram looks more impressive than your ROI calculation, you’re building the wrong system.

Before you commit ₹10–50 lakh to agentic AI

Spend five minutes on a blunt readiness pass: (1) Do you pass the decision table in §2? (2) How many red flags in §3 apply? (3) Is the architecture in §8 sketched for your stack—with audit logs and HITL on risky tools?

Authority stack: WHY autonomy breaks → this guide. WHEN to use multi-agent → multi-agent vs single agent. WHAT model stack → LLM productization + SLMs & enterprise ML trends. Implementation → Multi-agent architecture in production: patterns, costs, and tradeoffs (next).

If the honest answer is “we’re not sure,” that’s valuable: you just saved a pilot budget. Before you spend ₹10–50 lakh on agentic AI pilots and integrations, get a second opinion. Contact us, and we’ll tell you whether you’re ready for governed autonomy, or whether you should fix ops and use a single agent first so ROI doesn’t die in production.
