Is AI a Safe Career Choice for 2026–2036? Data-Backed

Is AI a safe career for 2026–2036? We analyze job security, which roles last, skill evolution & risks so you can plan. Data-backed. Updated March 2026.

Updated: March 3, 2026

In 2024, AI wrote its first production code. In 2025, it replaced junior developers in some workflows. So the question is no longer “Is AI growing?” but “Is AI itself a safe career?”

You might be a student choosing a major, a professional considering a pivot, or an engineer watching the field evolve at dizzying speed. The headlines are contradictory: companies can’t hire AI talent fast enough and salaries for machine learning engineers remain astronomical—yet pundits warn that AI will automate the very jobs being created. The rise of generative AI, automated machine learning (AutoML), and no-code AI platforms raises a legitimate concern: Will the AI career path I choose today exist in 2036?

This is not a simple yes-or-no question. “AI” is not a single job; it’s an entire ecosystem of roles, from research scientists building next-generation models to product managers integrating existing APIs. The safety and trajectory of your career depend entirely on which part of the AI landscape you occupy and how you position yourself for the coming evolution.

This guide provides a data-driven, forward-looking analysis of the AI job market from 2026 to 2036, identifying which roles are built to last, which face disruption, and how to build durable, adaptable career capital. For a concrete skill list, see our top 10 skills to start a career in AI; for the jobs-versus-automation debate, read our reality check on AI and employment.

Table of Contents

- The Great Paradox: Explosive Demand vs. Existential Fear

- The 2026–2036 Landscape: Three Eras of AI Evolution

- The AI Career Ecosystem: Mapping the Roles

- Risk Factors: Which AI Jobs Are Most Vulnerable?

- The Safe Zones: Roles Built for the Next Decade

- The Skill Stack: Building Career Durability

- Geographic and Industry Hotspots

- Action Plan: Your 5-Year AI Career Roadmap

- FAQ: AI Career Safety

- Conclusion: The Human Advantage

1. The Great Paradox: Explosive Demand vs. Existential Fear

Let’s start with the data, because the fear often drowns out the numbers.

The Demand Side:

- Job Growth: According to the World Economic Forum Future of Jobs Report 2025, AI and machine learning specialists are projected to be among the fastest-growing job categories between 2025 and 2030, with a growth rate of approximately 40%.

- Talent Gap: A 2025 McKinsey survey found that 60% of companies report difficulty filling AI-related roles—a gap that persists despite big-tech layoffs in 2023–2024. (McKinsey, “The State of AI in 2025.”)

- Salary Premium: Compensation data from levels.fyi shows that AI and ML roles continue to command a 30–50% premium over comparable software engineering positions.

The Fear Side:

- AutoML Proliferation: Platforms like Google’s Vertex AI and AWS SageMaker automate model selection, hyperparameter tuning, and deployment. The fear: “If a machine can build the model, why do we need you?”

- Generative AI Coding Assistants: Tools like GitHub Copilot, Cursor, and others are demonstrably accelerating software development. The fear: “If code writes itself, what happens to developers?”

- The “Citizen Developer” Rise: No-code/low-code platforms promise to let business users build AI-powered applications without writing a line of code.
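To make the AutoML concern concrete, here is a minimal sketch of what automated model selection boils down to: fit several candidate models, score each on held-out data, and keep the best one. The candidate models, the toy data, and every function name below are hypothetical and use only the Python standard library. Platforms such as Vertex AI or SageMaker do far more than this, and nothing here reflects their APIs.

```python
from statistics import mean


def fit_constant(xs, ys):
    """Baseline candidate: always predict the mean of the training targets."""
    average = mean(ys)
    return lambda x: average


def fit_line(xs, ys):
    """Second candidate: ordinary least-squares line y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    var_x = sum((x - x_bar) ** 2 for x in xs)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / var_x
    intercept = y_bar - slope * x_bar
    return lambda x: slope * x + intercept


def mse(model, xs, ys):
    """Mean squared error of a fitted model on a dataset."""
    return mean((model(x) - y) ** 2 for x, y in zip(xs, ys))


def select_model(candidates, train, valid):
    """Fit every candidate on train and return the one with the lowest validation MSE."""
    results = []
    for name, fit in candidates.items():
        model = fit(*train)
        results.append((mse(model, *valid), name, model))
    error, name, model = min(results, key=lambda result: result[0])
    return name, model, error


# Hypothetical toy data, roughly y = 2x + 1 with a little noise.
train = ([0, 1, 2, 3, 4], [1.1, 2.9, 5.2, 6.8, 9.1])
valid = ([5, 6], [11.0, 13.1])
CANDIDATES = {"constant": fit_constant, "linear": fit_line}

best_name, best_model, best_error = select_model(CANDIDATES, train, valid)
print(best_name, round(best_error, 4))
```

The loop itself is mechanical, which is why it automates well. Choosing the candidates, the validation split, and the metric that matches the business problem is the part that still needs a person.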

Reconciling the Paradox: The fear conflates task automation with job elimination. As economists like David Autor have documented, when a tool automates a task, demand for the higher-order skills around that task often increases. The spreadsheet didn’t kill accountants; it made them more valuable by freeing them from manual calculation. The same dynamic will play out in AI.

2. The 2026–2036 Landscape: Three Eras of AI Evolution

To understand career safety, you must understand the trajectory of the technology itself.

| Era | Timeline | Defining Characteristics | Career Implications |
|---|---|---|---|
| Era 1: Integration & Optimization | 2026–2029 | The “API Era.” Most companies stop building models from scratch. Focus shifts to integrating existing models (LLMs, vision models) into products, optimizing prompts, and managing costs. | High demand for applied AI engineers, prompt engineers, and product managers who understand AI capabilities. Less demand for “vanilla” model training. |
| Era 2: Specialization & Efficiency | 2030–2033 | Focus on domain-specific models. Efficiency gains through quantization, distillation, and edge deployment. Regulatory frameworks mature. | Rise of domain-expert AI engineers (e.g., “healthcare AI specialist,” “manufacturing AI specialist”). Demand for MLOps engineers to manage complex deployments. |
| Era 3: Agency & Systems | 2034–2036 | AI agents capable of multi-step reasoning and action. Focus shifts from individual models to multi-agent systems and orchestration. | Demand for systems architects, AI safety engineers, and agentic workflow designers. The “builder” role evolves into “orchestrator.” |

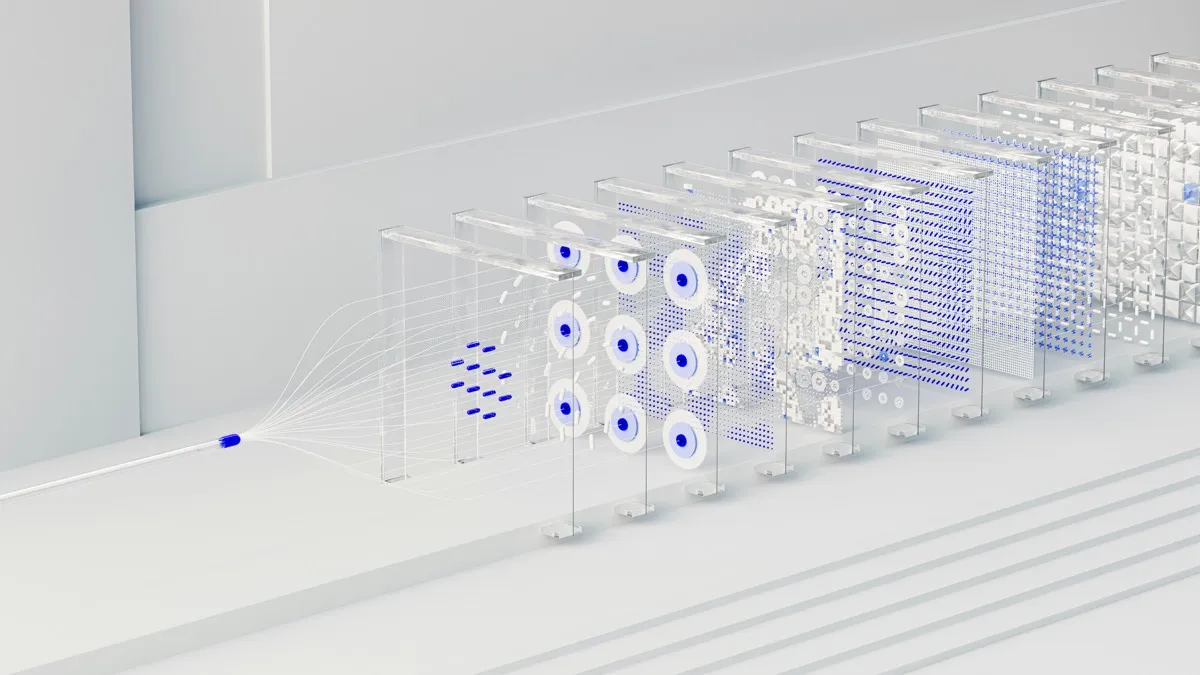

Key Insight: The “hot” job in 2026 (e.g., training foundation models) may be a commoditized specialty by 2031. The durable careers are those that adapt and move up the abstraction ladder—from building single models to integrating them, and from integration to orchestrating systems of agents. See the diagram below.

Diagram: The abstraction ladder—where career value moves over time.

flowchart TB

subgraph era1["Era 1: Integration (2026–2029)"]

A[Train / tune models] --> B[Integrate APIs and prompts]

end

subgraph era2["Era 2: Specialization (2030–2033)"]

B --> C[Domain-specific models and MLOps]

end

subgraph era3["Era 3: Agency (2034–2036)"]

C --> D[Multi-agent systems and orchestration]

end

style D fill:#22c55e,color:#fff

style A fill:#ef4444,color:#fff

3. The AI Career Ecosystem: Mapping the Roles

AI careers are not monolithic. They exist on a spectrum from pure research to pure application.

| Role Category | Example Titles | Primary Focus | Typical Background |
|---|---|---|---|
| AI Research Scientist | Research Scientist, Applied Scientist | Pushing the state-of-the-art. Publishing papers. Inventing new architectures. | PhD in CS/ML/Stats. Deep theoretical knowledge. |
| AI Engineer / ML Engineer | ML Engineer, AI Engineer | Building, training, deploying, and maintaining models in production. Focus on implementation. | MS/BS in CS/Engineering. Strong software engineering + ML knowledge. |
| MLOps / AI Infrastructure | MLOps Engineer, Platform Engineer | Building the pipelines, tooling, and infrastructure that enable ML teams to work. | Strong DevOps/SRE background with ML awareness. |
| Applied AI / Domain Specialist | Healthcare AI Specialist, Financial AI Analyst | Applying AI to a specific industry or domain. Deep domain knowledge is as critical as AI knowledge. | Domain expertise (finance, healthcare, manufacturing) + AI skills. |
| AI Product Manager | AI PM, Technical Product Manager | Defining the “what” and “why” of AI products. Bridging business need and technical feasibility. | Product management experience + strong AI literacy. |
| AI Ethics & Safety | AI Safety Engineer, Ethics Researcher, Policy Advisor | Ensuring AI systems are safe, fair, transparent, and aligned with human values. | Interdisciplinary: CS + philosophy/policy/social science. |
| Prompt / Interaction Engineer | Prompt Engineer, AI Interaction Designer | Designing and optimizing the interactions between humans and AI systems, particularly LLMs. | Linguistics, creative writing, UX design, or technical background. |

The 2026 Shift: The line between “ML Engineer” and “Software Engineer” is blurring. In the future, every software engineer will be an AI engineer. The question is not whether you use AI tools, but how deeply you understand them.

Diagram: The AI career pyramid—roles from frontier research down to product and application.

flowchart TB

P[AI Product Manager · Strategy]

D[Domain Specialist · Applied AI]

M[MLOps · Infrastructure]

E[ML / AI Engineer]

R[Research Scientist · Frontier]

R --> E --> M --> D --> P

style R fill:#7c3aed,color:#fff

style E fill:#2563eb,color:#fff

style M fill:#059669,color:#fff

style D fill:#d97706,color:#fff

style P fill:#dc2626,color:#fff

4. Risk Factors: Which AI Jobs Are Most Vulnerable?

Not all AI roles are equally safe. Certain categories face higher risk of commoditization or automation.

| High-Risk Factor | Explanation | Affected Roles |
|---|---|---|
| Commoditized Model Training | Training standard models (classification, regression) on clean data is increasingly automated by AutoML platforms. | Junior Data Scientists focused on “off-the-shelf” modeling. |
| Pure “Prompt Engineering” | As models improve and interfaces evolve, the need for humans to craft specific prompt syntax may diminish. | Roles narrowly focused on prompt syntax rather than system design. |
| Single-Platform Expertise | Over-specialization in a single vendor’s ecosystem (e.g., only AWS SageMaker) without transferable principles. | Platform-specific contractors who cannot adapt. |
| “Model Tuning” Without Systems Thinking | The ability to fine-tune a model is becoming a commodity. The value is in the system around the model. | Roles focused solely on fine-tuning without understanding deployment, monitoring, or product integration. |

The “Commoditization Curve”: Any task that becomes routine, well-defined, and high-frequency is a candidate for automation or commoditization. The safe roles are those that involve novelty, ambiguity, systems integration, and human interaction. From advising teams on AI adoption, I’ve seen this pattern repeatedly: the roles that get cut first are the ones that are easiest to describe in a job spec. The ones that endure are messier—they require judgment, context, and ownership of outcomes, not just outputs.
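One rough way to apply the commoditization curve to your own work is to rate each recurring task on the three traits named above and rank the results. The sketch below assumes a hypothetical 0 to 1 rating for each trait and an unweighted average. Both the scale and the equal weighting are illustrative choices, not an established metric.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    routine: float       # 0 = novel each time, 1 = same steps every time
    well_defined: float  # 0 = ambiguous goal, 1 = fully specified
    frequency: float     # 0 = rare, 1 = performed constantly


def commoditization_score(task: Task) -> float:
    """Unweighted average of the three traits; higher means more exposed."""
    ratings = (task.routine, task.well_defined, task.frequency)
    if any(not 0.0 <= rating <= 1.0 for rating in ratings):
        raise ValueError("ratings must be between 0 and 1")
    return sum(ratings) / len(ratings)


# Hypothetical self-assessment; the ratings are made up for illustration.
tasks = [
    Task("Design monitoring for a new fraud system", 0.3, 0.4, 0.3),
    Task("Fit a standard classifier on clean data", 0.9, 0.9, 0.8),
]

ranked = sorted(tasks, key=commoditization_score, reverse=True)
for task in ranked:
    print(f"{commoditization_score(task):.2f}  {task.name}")
```

If most of your week sits near the top of that ranking, that is a signal to shift time toward the messier, lower-scoring work.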

5. The Safe Zones: Roles Built for the Next Decade

Based on the trajectory of the technology and the commoditization curve, these role categories are positioned for long-term durability and growth.

| Safe Zone | Why It’s Durable | Key Skills |
|---|---|---|
| MLOps / AI Infrastructure | Someone must build and maintain the plumbing. Companies like Uber (ML platform for ETA, surge, fraud), Netflix (recommendation and content systems), and Stripe (fraud and risk models) already employ large MLOps and platform teams to keep production AI reliable at scale. As AI systems become more complex, demand for this skillset increases. | Kubernetes, Kubeflow, CI/CD for ML, model monitoring, data versioning, cloud architecture. |
| Domain-Specialized AI Engineering | Deep domain knowledge (legal, medical, manufacturing) is hard to automate. Firms such as Tempus (oncology AI), Kensho (financial analytics), and Siemens (industrial AI) hire engineers who combine domain expertise with applied ML. Applying AI to complex, regulated industries requires human judgment and contextual understanding. | Domain expertise + applied ML skills. Ability to translate domain problems into technical solutions. |
| AI Safety & Alignment | As systems become more powerful and autonomous, ensuring they behave as intended becomes a critical, non-commoditizable function. Organizations from Anthropic and OpenAI to regulated industries are hiring for red-teaming, interpretability, and alignment roles. | Reinforcement learning from human feedback (RLHF), interpretability, robustness testing, red-teaming. |
| AI Product Management | Deciding what to build, why to build it, and how to measure success is a fundamentally human, strategic function. | Product strategy, user research, technical literacy, go-to-market planning, ethical consideration. |
| Systems & Agent Orchestration | Building systems of multiple AI agents that collaborate, reason, and act requires architectural thinking beyond any single model. | Systems design, multi-agent frameworks, workflow orchestration, observability. |

In my experience working with AI and ML teams, the engineers who stay most valuable as the stack evolves are the ones who understand systems design and the full lifecycle—not just the latest model. Those who only tune models without owning deployment, monitoring, or product integration get squeezed when the job narrows. The safe zone is the intersection of technical depth and systems thinking.
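As a small illustration of what owning monitoring can look like in practice, the sketch below flags drift when the mean of a live batch of model scores moves too far from a baseline. The z-score approach, the threshold of 3, and the sample numbers are all assumptions chosen for brevity. Production systems typically track many features and use more robust tests.

```python
from statistics import mean, stdev


def drift_z_score(baseline, live):
    """Z-score of the live batch mean, using the baseline's mean and spread."""
    standard_error = stdev(baseline) / len(live) ** 0.5
    if standard_error == 0:
        raise ValueError("baseline has no variance; z-score is undefined")
    return (mean(live) - mean(baseline)) / standard_error


def has_drifted(baseline, live, threshold=3.0):
    """True when the live mean sits more than `threshold` standard errors away."""
    return abs(drift_z_score(baseline, live)) > threshold


# Hypothetical model scores: a reference window and two live batches.
baseline_scores = [0.48, 0.52, 0.50, 0.47, 0.53, 0.51, 0.49, 0.50]
stable_batch = [0.49, 0.51, 0.50, 0.52]
shifted_batch = [0.70, 0.72, 0.68, 0.71]

print("stable batch drifted:", has_drifted(baseline_scores, stable_batch))
print("shifted batch drifted:", has_drifted(baseline_scores, shifted_batch))
```

The check is a few lines. Deciding which signals to watch, what threshold is worth paging someone for, and what to do when it fires is the systems thinking the paragraph above describes.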

6. The Skill Stack: Building Career Durability

To thrive in the 2026–2036 AI landscape, you need more than a single technical skill. You need a layered, durable skill stack.

Diagram: The durable AI career skill stack—build from the bottom up.

flowchart TB

subgraph Meta["Meta: Adaptability & Learning"]

M[Learn new tools · Read papers · Critical thinking]

end

subgraph Spec["Specialization: Depth in a Domain"]

S[Healthcare · Finance · Manufacturing · Legal]

end

subgraph Core["Core: Systems & Engineering"]

C[Distributed systems · APIs · Testing · Monitoring]

end

subgraph Found["Foundation: Principles, Not Platforms"]

F[Math · Stats · ML fundamentals]

end

Found --> Core --> Spec --> Meta

style Found fill:#1e40af,color:#fff

style Core fill:#2563eb,color:#fff

style Spec fill:#3b82f6,color:#fff

style Meta fill:#60a5fa,color:#000

| Layer | Focus | Examples |
|---|---|---|
| Foundation: Principles, Not Platforms | Understand the underlying concepts that transcend any specific tool or model. | Linear algebra, calculus, probability, ML fundamentals (bias-variance, loss functions), statistics. |
| Core: Systems & Engineering | Build robust, scalable systems. Write clean, maintainable code. Understand the full lifecycle. | Software engineering best practices, distributed systems, databases, API design, testing, monitoring. |
| Specialization: Depth in a Domain | Develop deep expertise in a specific industry or problem area. | Healthcare (HIPAA, clinical workflows), finance (risk models, regulations), manufacturing (IIoT, control systems). |
| Meta: Adaptability & Learning | The ability to learn new tools, frameworks, and paradigms as they emerge. | Rapid prototyping, reading research papers, critical thinking, intellectual curiosity. |

The 80/20 Rule: Spend 20% of your learning time on the “shiny new thing” (the latest model), and 80% on the durable foundations and systems thinking that will outlast any single technology wave.
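The arithmetic behind the 80/20 split is simple, but seeing it in minutes makes it easier to schedule. The sketch below assumes a hypothetical weekly budget of 4 hours, and the function name is made up for illustration.

```python
def split_learning_minutes(weekly_hours, trend_share=0.20):
    """Split a weekly learning budget into minutes for new tools versus foundations."""
    if weekly_hours < 0 or not 0 <= trend_share <= 1:
        raise ValueError("hours must be non-negative and share between 0 and 1")
    total_minutes = round(weekly_hours * 60)
    trend_minutes = round(total_minutes * trend_share)
    return {
        "new_tools": trend_minutes,
        "foundations": total_minutes - trend_minutes,
    }


# Assumed budget: 4 hours per week.
plan = split_learning_minutes(4)
print(plan)
```

On a 4-hour week that leaves under an hour for the newest release and a little over three hours for fundamentals and systems work.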

7. Geographic and Industry Hotspots

Safety also depends on where you are and who you work for.

Geographic Hubs (2026–2036):

- Established Hubs: SF Bay Area, NYC, London, Beijing, Shenzhen will remain centers of gravity for frontier AI research.

- Emerging Hubs: Toronto, Montreal, Berlin, Tel Aviv, Bangalore, Singapore are seeing significant growth in applied AI.

- Distributed Reality: Remote work is normalized. You can build an AI career from anywhere, provided you have strong communication skills and can operate asynchronously.

Industry Hotspots:

- Healthcare & Biotech: AI for drug discovery, diagnostics, personalized medicine.

- Finance: Algorithmic trading, fraud detection, risk management.

- Manufacturing & Logistics: Predictive maintenance, supply chain optimization, autonomous systems.

- Automotive & Robotics: Autonomous vehicles, warehouse automation.

- Defense & Government: AI for national security, intelligence analysis (a growing, if controversial, sector).

8. Action Plan: Your 5-Year AI Career Roadmap (2026–2031)

Year 1: Foundation Building

- Master the fundamentals: Python, SQL, linear algebra, statistics.

- Complete a rigorous ML course (e.g., Andrew Ng’s Machine Learning Specialization).

- Build 2–3 end-to-end projects and put them on GitHub.

Year 2: Depth & Specialization

- Choose a domain (healthcare, finance, robotics) and go deep. Take domain-specific courses, read industry publications.

- Learn MLOps fundamentals: Docker, Kubernetes, model deployment, monitoring.

- Contribute to an open-source AI project.

Year 3: Professional Integration

- Land a role as a junior AI/ML engineer or data scientist.

- Focus on understanding the full product lifecycle, not just model building.

- Seek mentorship from experienced engineers and product managers.

Year 4: Systems Thinking

- Deepen your software engineering and systems design skills.

- Take ownership of a production AI system. Learn to debug, monitor, and improve it.

- Begin mentoring junior team members.

Year 5: Specialization or Generalization Pivot

- Path A (Technical Depth): Double down on a specialized area (e.g., AI safety, multi-agent systems, MLOps at scale). Aim for staff/principal engineer track.

- Path B (Technical Breadth): Pivot towards AI product management, technical leadership, or starting your own venture. Leverage your technical foundation to make strategic decisions.

9. FAQ: AI Career Safety

Q: Will AI replace AI engineers? A: No, but it will redefine the job. AI tools will automate routine coding and model selection, freeing engineers to work on higher-level problems: systems architecture, novel applications, and human-AI interaction. The engineer of 2036 will be more productive, not obsolete.

Q: I’m a software engineer. Should I pivot entirely to AI? A: You don’t need to pivot entirely. You need to integrate. Every software engineer should become an AI-literate software engineer. Learn to use AI tools effectively, understand when to call an API versus train a custom model, and how to build systems around AI components.

Q: Is a PhD necessary for a safe AI career? A: For research scientist roles at top labs, yes. For the vast majority of applied AI engineering and product roles, no. A strong portfolio and deep practical experience are often more valuable.

Q: What about the ethical concerns? Will regulation kill jobs? A: Regulation creates jobs. The EU AI Act and similar frameworks (e.g., sectoral rules in healthcare and finance) are creating demand for AI compliance officers, auditors, and safety engineers. Responsible AI is not a niche; it’s becoming a standard business function.

Q: How do I stay updated without burning out? A: Curate your inputs ruthlessly. Follow 5–10 key researchers and practitioners. Read summaries, not every paper. Focus on understanding trends, not chasing every new model release. Spend 2–4 hours per week on continuous learning, not 20.
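The FAQ answer about knowing when to call an API versus train a custom model can be partly framed as a break-even calculation. The sketch below compares a pay-per-use API against a custom model with a fixed monthly cost plus a lower per-request cost. All prices are hypothetical placeholders expressed in dollars per 1,000 requests, and cost is only one input alongside latency, data privacy, and quality requirements.

```python
import math


def monthly_cost_api(thousand_requests, price_per_thousand):
    """Pay-per-use cost: no fixed fee, linear in volume."""
    return thousand_requests * price_per_thousand


def monthly_cost_custom(thousand_requests, fixed_monthly, cost_per_thousand):
    """Custom model cost: fixed infrastructure and staffing plus a per-request cost."""
    return fixed_monthly + thousand_requests * cost_per_thousand


def break_even_thousands(price_per_thousand, fixed_monthly, cost_per_thousand):
    """Smallest monthly volume (in thousands of requests) where custom is no
    more expensive than the API, or None if the API is always cheaper."""
    saving_per_thousand = price_per_thousand - cost_per_thousand
    if saving_per_thousand <= 0:
        return None
    return math.ceil(fixed_monthly / saving_per_thousand)


# Hypothetical prices: API at $10 per 1,000 requests, custom model at
# $5,000 per month fixed plus $2 per 1,000 requests.
volume = break_even_thousands(10, 5000, 2)
print("break-even volume (thousand requests per month):", volume)
```

Below the break-even volume the API is the cheaper option under these assumptions, and above it the custom model starts to pay for itself.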

10. Conclusion: The Human Advantage

Is AI a safe career choice for the next 10 years? Yes, but only if you choose the right part of the ecosystem and build the right kind of skills.

The safe haven is not in competing with AI on its own terms—building faster models, writing more code. That’s a race you will lose. The safe haven is in the uniquely human layers that surround the technology:

- Strategic judgment: Deciding what to build and why.

- Systems integration: Connecting AI components to complex, real-world systems.

- Domain expertise: Applying AI to problems that require deep contextual understanding.

- Ethical oversight: Ensuring powerful systems are used responsibly.

- Human connection: Building trust, leading teams, and communicating with stakeholders.

The next decade will not be kind to those who learn a single, narrow AI skill and stop. But it will be extraordinarily rewarding for those who build a durable foundation, cultivate adaptability, and position themselves at the intersection of technology and human need.

Bold prediction (next 5 years): “AI Engineer” will split into two durable titles: AI product engineer (ships features, owns RAG/agents/guardrails, works with product) and AI systems engineer (reliability, scale, infra). The generic “ML engineer” will still exist but the hiring action will be in those two. I’d bet on the product side if you like cross-functional work; on systems if you like depth.

My read: The safest bet is still “strong software fundamentals + AI application layer.” The people who get displaced first are the ones who only know one framework or one use case. Breadth of systems, depth in one AI area, and the ability to own outcomes—that’s the combo.

AI is not a destination. It’s a landscape. And for those who learn to navigate it with curiosity, rigor, and a focus on enduring principles, it offers one of the most secure and fulfilling career paths of the 21st century.

Stop worrying about the future. Start building the skills that will define it.

Download our free “AI Career Durability Checklist” to assess your current skill stack, identify gaps, and create a personalized 12-month learning roadmap aligned with the 2026–2036 landscape.
